R&D: Unobtrusive Mood Recognition System

Philips Research, Eindhoven

Project Details

- Role: Lead Researcher & Primary Inventor

- Methods: Experimental Design, Behavioral Coding, Quantitative Analysis (SPSS), Academic Writing

- Outcome: Granted patent, published paper (ACII 2011), and foundational algorithms for mood-sensing devices

The challenge

Detecting Mood Without Wires

Philips Research wanted to explore the future of "Ambient Intelligence": work environments that could detect a user's mood and adapt the atmosphere accordingly. The challenge was to determine whether a system could recognize mood unobtrusively, that is, without heart-rate monitors or skin sensors. Existing studies had a key weakness: they relied on actors portraying exaggerated emotions for only 2-3 seconds. My goal was to determine whether mood could be detected from natural body posture over longer periods (8 minutes) in a realistic environment.

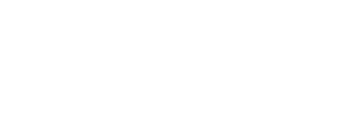

Statistically Significant Signals: Data visualization showing the breakdown of "Head Tapping" frequency. My analysis revealed that this behavior uniquely identified "Positive High" mood states, forming the basis of our patent.

My role

Experimental Design & Behavioral Coding

I designed the research protocol to bridge the gap between psychological theory and engineering constraints.

- Metric Definition: Since this was a novel field, I had to determine what to measure. I developed and validated a rigorous coding system to track temporal changes across six postural dimensions (Head, Shoulders, Trunk, Arms, etc.).

- Behavioral Analysis: I analyzed video data of users under mood-inducing conditions, coding naturalistic displays of posture.

- Quantitative Validation: I used SPSS to analyze the frequency and duration of specific movements to find statistically significant correlations between posture and emotional state.
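The frequency-and-duration analysis described above was carried out in SPSS. Purely as an illustration, the Python sketch below shows the same kind of aggregation over coded video annotations: each coded behavior instance has an onset and offset, and the sketch counts instances and sums their durations per mood condition and behavior. The event schema (`CodedEvent`), the behavior labels such as `head_tap`, the `Negative Low` condition, and all numbers are hypothetical placeholders, not the study's actual coding scheme or data.

```python
from collections import defaultdict
from typing import NamedTuple


class CodedEvent(NamedTuple):
    """One behavior instance marked by a coder on the video timeline (hypothetical schema)."""
    participant: str
    mood: str        # induced mood condition, e.g. "Positive High"
    behavior: str    # coded behavior label, e.g. "head_tap" (hypothetical)
    start_s: float   # onset in seconds from the start of the session
    end_s: float     # offset in seconds from the start of the session


def summarize(events):
    """Return {(mood, behavior): (frequency, total_duration_s)} over all events."""
    counts = defaultdict(int)
    durations = defaultdict(float)
    for e in events:
        if e.end_s < e.start_s:
            raise ValueError(f"event ends before it starts: {e}")
        key = (e.mood, e.behavior)
        counts[key] += 1                      # frequency: number of coded instances
        durations[key] += e.end_s - e.start_s  # duration: total seconds displayed
    return {k: (counts[k], durations[k]) for k in counts}


# Illustrative, made-up annotations (not data from the study).
events = [
    CodedEvent("p01", "Positive High", "head_tap", 12.0, 14.5),
    CodedEvent("p01", "Positive High", "head_tap", 30.0, 31.0),
    CodedEvent("p02", "Negative Low", "trunk_lean", 5.0, 65.0),
]

for (mood, behavior), (freq, dur) in sorted(summarize(events).items()):
    print(f"{mood:<14} {behavior:<11} n={freq} total={dur:.1f}s")
```

Per-condition tables like this are the kind of input a statistical test would then compare across mood states; the test itself is left out of the sketch.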

The outcome

A Patent on "Headbanging"

The research yielded a breakthrough discovery: specific head movements ("head tapping") were displayed exclusively during "Positive High" energy mood states, making the head the most reliable body region for differentiating between moods.

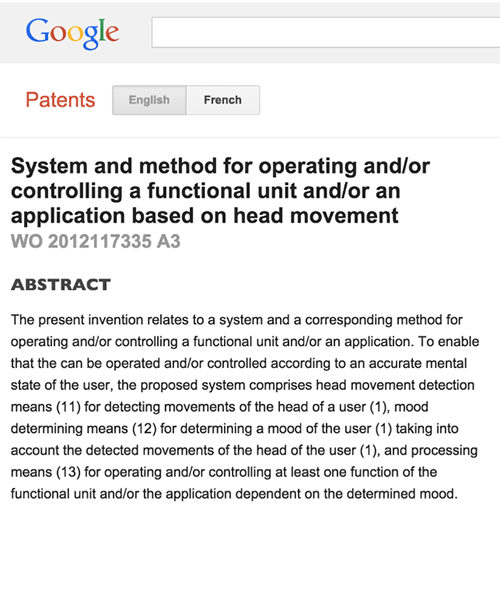

- Intellectual Property: As the main inventor, I filed a patent that was subsequently granted. The patent sets out the algorithmic rules for designing products that detect mood from head movement.

- Academic Recognition: The results were published at the Affective Computing and Intelligent Interaction conference (ACII 2011).

- Feasibility Confirmed: The study showed that natural, unacted postures carry enough signal to train mood-recognition algorithms for consumer electronics.

Paper: Mood Recognition Based on Upper Body Posture and Movement Features (ACII 2011)


Get in touch

If you are interested in working with me or would just like to say hello, please get in touch. Looking forward to hearing from you!

info[at]michellebrauer.net